👋 Hey friends,

Here’s what I’ve got in store for you this week:

Another snippet from The Backend Lowdown

Ralph Kit - a starter kit for using the ralph loop that I’ve been using

News Nug - a site I launched to keep up with AI news

Upcoming tip: invalid index gotcha

Let’s get into it 👇

Every newsletter will include a snippet from my book in progress, The Backend Lowdown, available for $5 right now on Gumroad!

Get The Backend Lowdown →Chapter 2: Indexing, Query Planning, and Performance

How To Use Explain

When you prepend a query with EXPLAIN, the database doesn't execute it - instead, it shows you the execution plan: a step-by-step blueprint of how it would run the query. Each line in the plan represents an operation the database will perform, in order, from the innermost data access to the final output.

Here's a basic example:

EXPLAIN SELECT * FROM users WHERE email = '[email protected]';The output might look something like:

Index Scan using index_users_on_email on users (cost=0.29..8.30 rows=1 width=100)

Index Cond: (email = '[email protected]')Let's break it down.

The Plan Is a Tree

Even though it's shown top-to-bottom, the plan is actually a tree of operations - each one feeding into the next. For simple queries, it's often just one step. For complex joins or subqueries, you'll see multiple nested levels.

Key Things to Look At

Scan Type

This tells you how the database is accessing the data:Seq Scanmeans a full-table scan - often slow on large tables.Index ScanorIndex Only Scanmeans it's using an index - usually good.Bitmap Heap Scanmeans it's using part of an index, but still hitting the heap.

Index Used

Shown in lines likeIndex Scan using index_users_on_email. Confirms which index the planner chose.Filter or Condition

Index Cond:orFilter:shows the condition being applied. Sometimes, an index exists but isn't used because the condition doesn't match it exactly.Cost

Displayed ascost=START..END. It's not in seconds - it's a relative estimate of work, useful for comparing steps. Lower is better.Rows Estimate

The planner's guess of how many rows this step will produce. If it's wildly off from reality, your statistics may be stale.

EXPLAIN vs EXPLAIN ANALYZE

It's important to understand the difference:

EXPLAINshows what the database plans to doEXPLAIN ANALYZEactually runs the query and shows what really happened

Example:

EXPLAIN ANALYZE SELECT * FROM users WHERE email = '[email protected]';This gives you:

Actual timing (how long each step took)

Actual row counts (vs estimated)

Whether the plan was efficient or not

EXPLAIN ANALYZE executes the query - which means it can write to or delete data if used with INSERT, UPDATE, or DELETE. Be careful, especially on production. Even if you're running a SELECT statement, it could still impact the database's performance if the query is slow!

Ralph Kit: Your Ralph Loop Starter Kit

I recently posted about the Ralph Wiggum loop and got a great response, so I wanted to share the starter kit I’ve been using.

The Ralph loop is a simple pattern for long-running LLM agent work: give the agent a prompt doc and a tasklist doc, then loop. On each invocation, it reads the prompt, finds the last unchecked task, completes it, marks it done, and repeats.

If you want to try it yourself, here it is:

Fork it, tweak it, make it yours.

A few things I like about it:

The Markdown checklist is the state. If it crashes mid-run, just start it again and it resumes from the next

- [ ]~320 lines of bash, with zero dependencies beyond the Claude CLI

Inline per-item tags like

@opus,@sonnet,@haiku,@turns:N, and@prompt:pathA built-in evaluator pattern that can review prior work and insert remediation tasks when it finds bugs

Token-efficient by design: only the current task is injected, not the full checklist

Verifies the agent actually checked the item off, and halts on

> BLOCKED:notesPer-iteration git commits, so your history reads like the checklist and bad steps are easy to revert

Live tool-call streaming, cost-per-turn output, and a

latest.logsymlink fortail -fHard turn limits per iteration, so runaway agents don’t burn through budget

--dry-runmode to validate everything before making a single API call

The whole idea is simple: the checklist is the database, the agent is the worker, and git is the audit log.

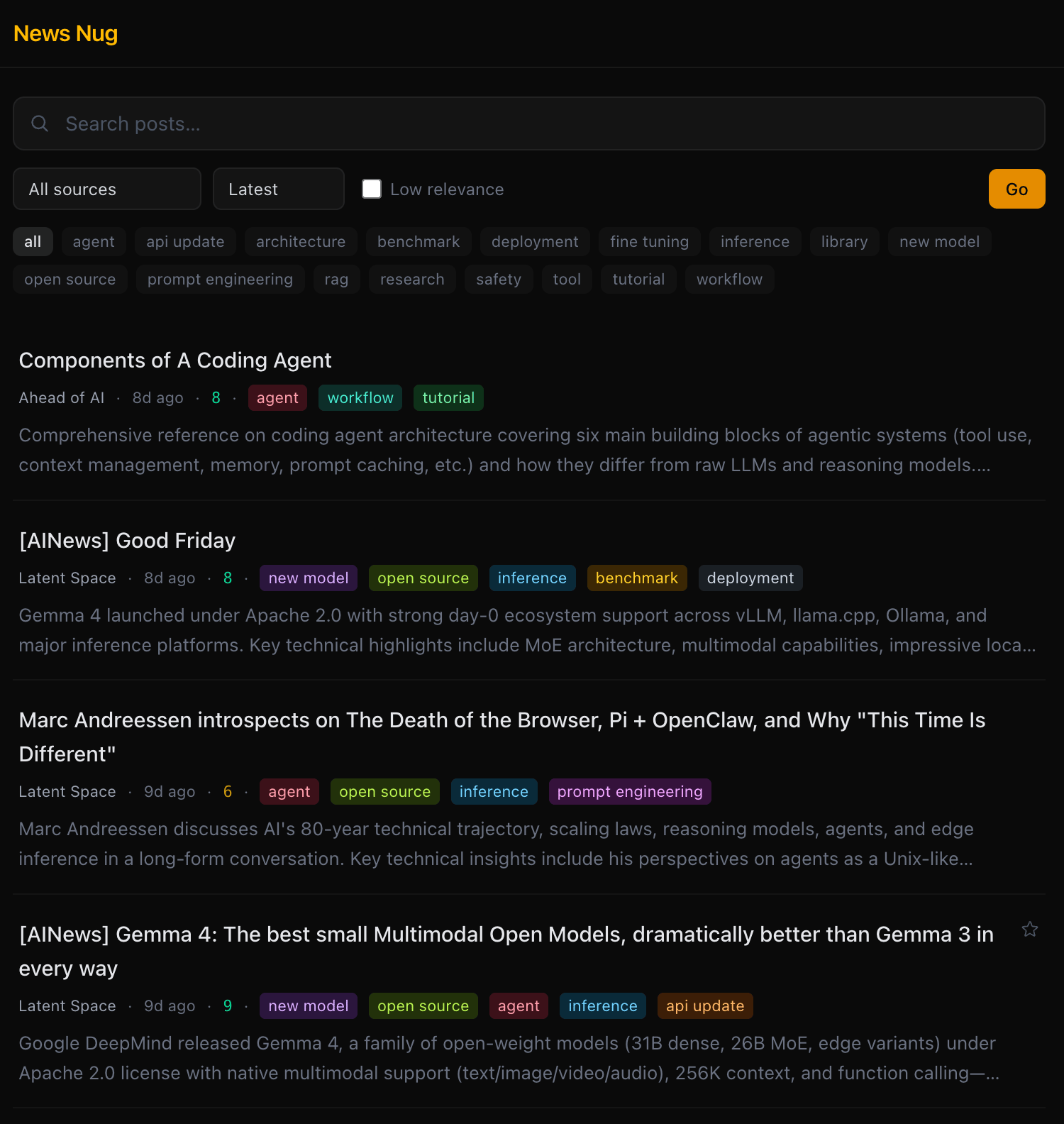

News Nug: Stay up to date with AI

AI is already changing how a lot of us work, and the pace is ridiculous. I wanted a way to keep up without scrolling 10 different sites every day, so I built this:

News Nug is an AI news reader built for developers.

It pulls posts into a clean single-column feed from ~15 sources I actually care about: Anthropic, OpenAI, DeepMind, Hugging Face, Simon Willison, r/LocalLLaMA, Hacker News, GitHub Trending, and more.

Then it does a few useful things on top:

Relevance scoring: every post gets a 1–10 score from Claude Haiku based on how relevant it is for SWE-with-AI work, and low-signal stuff is hidden by default

SWE-focused summaries: each post gets a short 2–3 sentence summary so you can quickly tell why it matters

Auto-tagging: posts are tagged into buckets like

new-model,tool,library,workflow,research,benchmark, andagentFast filtering: filter by source, tag, relevance, bookmarked, or search across titles and summaries

Read state + bookmarks: clicked posts auto-dim, and you can star the ones you want to come back to later

Minimal UI: dark mode, mobile-friendly, and intentionally built to be easy to scan

There’s also some backend stuff I’m happy with:

scheduled ingestion with per-source fetch frequencies

multiple fetcher types: RSS/Atom, HN Algolia API, GitHub search API, plus scraping for sites without feeds

an admin panel for managing sources, triggering fetches, deleting posts, and regenerating summaries

The goal is simple: less noise, more signal, and a faster way to keep up with the AI updates that actually matter for developers.

News Nug

Upcoming Tip: Invalid Index Gotcha

I’ll be doing a post about this next week, but wanted to share a preview:

In Postgres, a failed concurrent index build can leave behind an invalid index. The nasty part is that it may not help your queries at all, but it can still add overhead to writes. So if CPU is elevated and the usual suspects are not obvious, it is worth checking for invalid indexes lingering in the background.

Stay tuned for the post!

That’s a wrap for this week. If something here made your day smoother, feel free to reply and tell me about it. And if you think a friend or teammate would enjoy this too, I’d be grateful if you shared it with them.

Until next time,

Steven